Vibe-Coding Mini-Games: Teaching Prompt Engineering by Making Something Students Want to Play

In Short

This month’s practical: students use AI to build a tiny mini-game—because making something you want to play is the best incentive to learn.

The real lesson is prompt-engineering habits: Garbage-In-Garbage-Out (GIGO), knowing what you want, clear communication, actionable feedback, and an iterative mindset.

We used Replit Agents because the school was willing to pay for a subscription, but any similar vibe-coding assistant works (e.g., Canva Code is free for educational institutions).

Lesson Flow

Vibe-coding is a new, AI-first approach to software development: instead of learning to code in a programming language, the student uses conversational language to instruct the AI to create a program for them. It abstracts away the complexity of programming and replaces it with plain-English communication about what problem the software aims to solve, how the app should look and feel, and how users are meant to use it. The results are often surprising and delightful.

Introduce vibe coding

We opened with Andrej Karpathy’s well-known quote, “The hottest new programming language is English”, and pointed out that, as an OpenAI co-founder and the former director of AI at Tesla, he is making an informed point about the future of software development. To drive it home, we played a short video about Canva’s new AI coding feature to set expectations and energy.

Students learn:

Motivation first: when the outcome is fun and personal, attention to detail follows naturally.

AI is a tool, not a magician; quality depends on the clarity of your request.

Know what you want

Students chose a tiny, achievable concept (e.g., a cookie clicker game, a solar system visualiser, two-player Snake, or tic-tac-toe on a 4x4 board), and had to write a short spec for what they’d like to build:

After answering these short questions, they shared their spec with a neighbour to check that someone else could actually understand what they’d like to build.

Students learn:

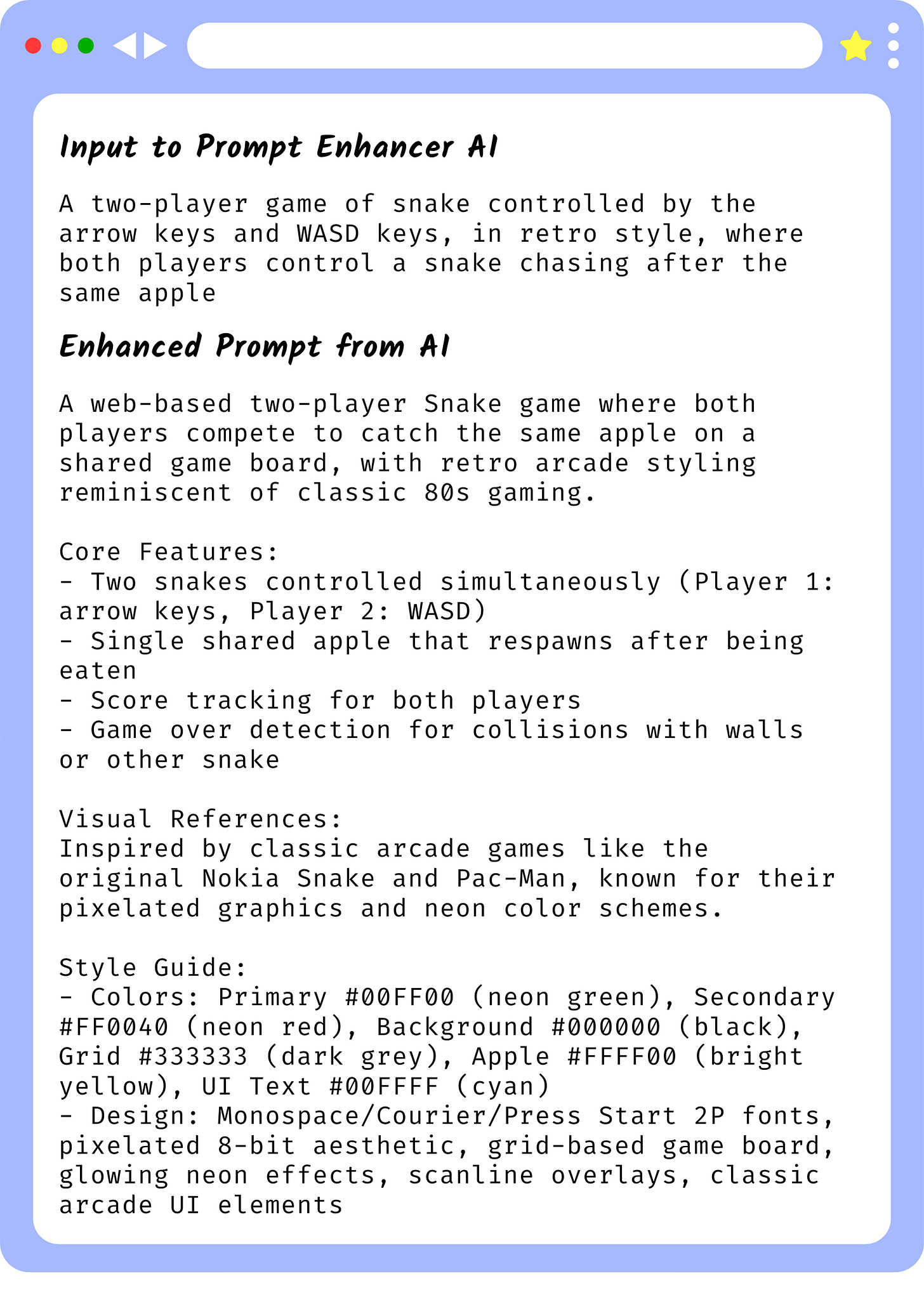

GIGO in practice: telling the AI to “make a game for me” will yield unwanted results; “a two-player game of Snake controlled by the arrow keys and WASD keys, in retro style, where both players control a snake chasing after the same apple” is buildable.

How to use descriptive language to make their concept tangible and understandable: if your friend cannot understand you, the AI probably won’t either.

Draft → Generate v1 with AI

Students used a chatbot to expand their game specifications into a detailed prompt, which could be entered into Canva Code to generate a first draft. We modelled a prompt scaffold, then let them adapt it.

Students learn:

Use AI to control AI: if they don’t know how to create a detailed specification, the AI can help them.
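To picture what a prompt scaffold can look like, here is a minimal Python sketch that turns a short spec into a more detailed prompt. The field names (`concept`, `controls`, `style`, `goal`) and the template wording are assumptions made for illustration; they are not the scaffold or the questions used in class.

```python
from dataclasses import dataclass


@dataclass
class GameSpec:
    # Hypothetical fields; the questions students actually answered may differ.
    concept: str
    controls: str
    style: str
    goal: str


def build_prompt(spec: GameSpec) -> str:
    """Assemble a detailed prompt from a short spec, one fact per line."""
    lines = [
        f"Build a small browser game: {spec.concept}.",
        f"Controls: {spec.controls}.",
        f"Visual style: {spec.style}.",
        f"Goal: {spec.goal}.",
    ]
    return "\n".join(lines)


# Example spec based on the two-player Snake idea described earlier.
snake = GameSpec(
    concept="a two-player game of Snake",
    controls="player one uses the arrow keys, player two uses WASD",
    style="retro",
    goal="both snakes chase the same apple",
)
prompt = build_prompt(snake)
print(prompt)
```

The point of the structure is that an empty field is immediately visible: if a student cannot fill in `controls` or `goal`, they do not yet know what they want.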

Test like a skeptic, then iterate

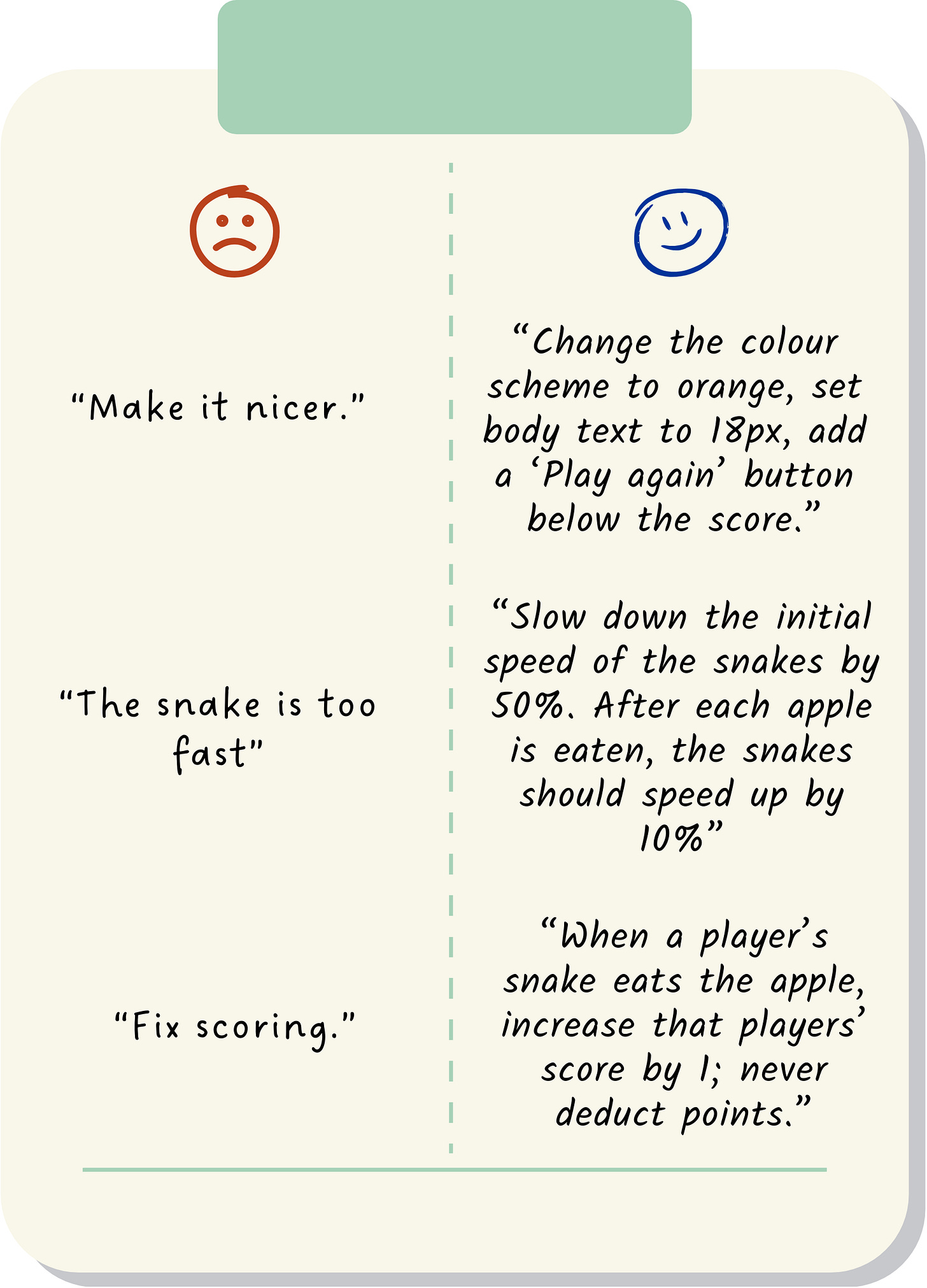

Pairs tried to break each game and wrote a list of changes they wanted to make. Those wishes then had to be rewritten as specific and actionable prompts for the AI to fix.

Students learn:

Iteration beats inspiration: small, boring improvements compound.

Feedback should be specific and actionable.
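One way to make "specific and actionable" concrete is a fill-in-the-blanks template. The Python sketch below is only an illustration: the function name `fix_prompt`, its three parameters, and the example bug are hypothetical, not taken from the lesson.

```python
def fix_prompt(where: str, observed: str, expected: str) -> str:
    """Turn a tester's wish into a specific request the AI can act on."""
    return (
        f"In {where}, the game currently {observed}. "
        f"Change it so that it {expected}. "
        "Do not change anything else."
    )


# A vague wish, and the same wish rewritten with the template.
vague = "make the snake better"
specific = fix_prompt(
    where="the two-player Snake game",
    observed="lets a snake pass through the other snake",
    expected="ends the round when two snakes collide",
)
print("Vague:   ", vague)
print("Specific:", specific)
```

The template forces three answers (where, what happens now, what should happen instead), which is roughly what a tester needs to say before the AI can fix anything.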

Gallery walk and reflection

Students had 30 minutes to iterate, and then they had to set up their games for others to play. After playing someone’s game, they had to leave one piece of praise and one suggestion (both specific and actionable, of course).

As a wrap-up, the teacher made an explicit connection between what students had learned that day and how they should be using AI: have a clear vision of what you want, give clear instructions, and keep iterating.

Play the game yourself!

Students learn:

Clarity and accessibility are part of “done”, not extras.

Shipping creates accountability; a playable link focuses attention.

Conclusion

When brainstorming with the school, the teacher originally wanted students to learn coding from scratch, so that they would have strong fundamentals in traditional coding before being introduced to AI coding tools. That idea soon went out the window once the teacher realised how steep the learning curve would be. I think it was good prioritisation to first get students excited by showing them what they can accomplish, and only then deepen their learning by going back to the fundamentals. The bigger win, though, was mindset: students moved from “tell the AI to make a game” to specifying, testing, and refining, skills they can carry into essays, research notes, slide decks, and beyond.